Graph Queries in a Unified Database: From Cypher to Posting Lists

The Polyglot Problem

Modern applications increasingly need to traverse relationships. Social networks need friends-of-friends queries. Fraud detection systems need to follow transaction chains. Recommendation engines need to walk purchase-and-preference graphs.

The conventional solution is straightforward: deploy a graph database alongside your relational database. Neo4j or Amazon Neptune handles the graph workload, while PostgreSQL handles everything else.

This works until it doesn't. The moment you need a query that spans both systems, you face a fundamental architectural problem:

- Data duplication: Entity data must exist in both the relational store and the graph store.

- Synchronization: Changes in one system must propagate to the other, typically through Kafka pipelines or CDC connectors.

- No cross-system transactions: An ACID guarantee that spans a relational INSERT and a graph MERGE does not exist in a polyglot architecture.

- Two query languages: Application code must context-switch between SQL and Cypher (or Gremlin), often for what is logically a single question.

We built Cognica to eliminate this boundary entirely.

Graph Operations as Posting Lists

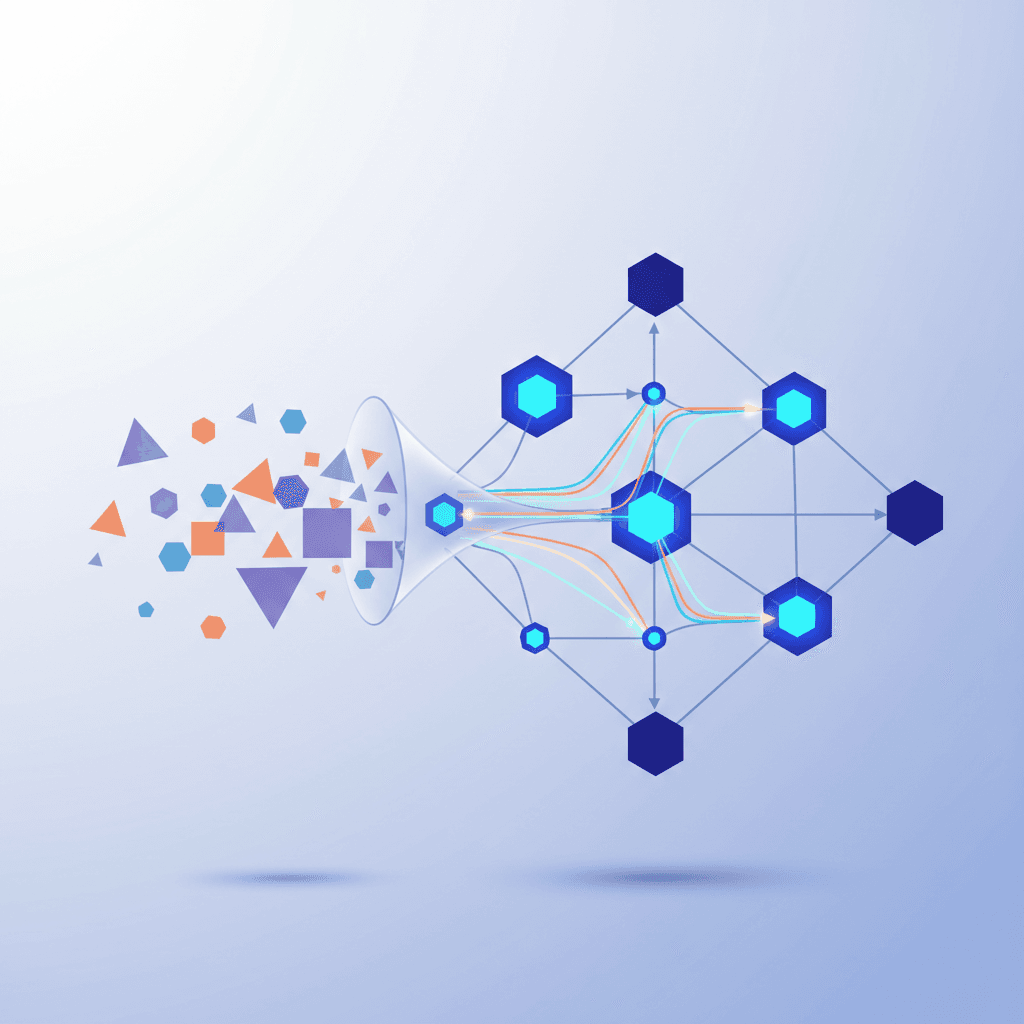

Cognica's engine is built on a single mathematical abstraction: the posting list. Every query paradigm — relational SQL, full-text search, vector similarity, and graph traversal — compiles to operations that produce and compose posting lists through Boolean algebra.

For graph queries, the key insight is that graph traversal results are structurally identical to document retrieval results: both are sets of entity IDs that satisfy some predicate.

| Operation | Input | Output |

|---|---|---|

WHERE age > 30 | Table scan | Posting list of matching row IDs |

WHERE content @@ 'database' | Inverted index | Posting list of matching doc IDs |

ORDER BY embedding <=> $vec | HNSW index | Posting list of nearest neighbor IDs |

MATCH (a)-[:FOLLOWS*1..3]->(b) | Adjacency traversal | Posting list of reachable vertex IDs |

Because every operation returns a posting list, they compose through the same Boolean operators: AND (intersection), OR (union), NOT (complement). A query that combines full-text search with graph traversal requires no special fusion logic — it is just two posting lists intersected.

Property Graph on Document Storage

Cognica stores graphs as pairs of document collections. A graph named social produces two collections:

social_nodes stores vertices:

{ "_id": "social:Person:1001", "_label": "Person", "_graph": "social", "name": "Alice", "age": 30, "department": "Engineering" }

social_edges stores edges:

{ "_id": "social:FOLLOWS:2001", "_source": "social:Person:1001", "_target": "social:Person:1002", "_type": "FOLLOWS", "_graph": "social", "since": "2024-01-15" }

This dual-collection model has a critical advantage: it reuses the entire document storage infrastructure. Indexes, ACID transactions, LSM-tree compaction, cursor iteration, and federation — all of it works on graph data without a single line of graph-specific storage code.

Composite indexes on (_source, _type) and (_target, _type) enable efficient adjacency lookups in both directions. An LRU adjacency cache further accelerates multi-hop traversals by keeping pre-computed neighbor relationships in memory.

Cypher Through Parse-Time Rewriting

Cognica supports Apache AGE-compatible Cypher syntax. But rather than building a separate graph query engine, we translate Cypher into SQL at parse time.

SELECT * FROM cypher('social', $$ MATCH (a:Person)-[:FOLLOWS*1..3]->(b:Person) WHERE a.name = 'Alice' RETURN b.name, b.role $$) AS (name TEXT, role TEXT);

When the SQL parser encounters the cypher() function in a FROM clause, it triggers a translation pipeline:

- Cypher Lexer — A hand-written scanner tokenizes pattern arrows (

-[:TYPE]->) and case-insensitive keywords. - Cypher Parser — Builds a Cypher-specific AST from the token stream.

- Cypher-to-SQL Rewriter — Transforms the Cypher AST into standard SQL AST nodes that reference graph table functions.

- Standard Optimizer — The rewritten SQL re-enters the same cost-based optimizer used for all other queries.

This design means the SQL optimizer has full visibility into the graph query structure. Predicate pushdown, join reordering, and index selection all work across graph-relational boundaries, because by the time optimization runs, there is no distinction between "graph operations" and "relational operations" — they are all SQL plan nodes.

Composing Paradigms in a Single Query

The real power emerges when graph operations compose with other paradigms. Consider a research paper discovery query that combines text matching, vector similarity, graph centrality, and relational filtering:

SELECT title, year, field, _score FROM papers WHERE fuse_log_odds( bayesian_match(title, 'attention'), knn_match(embedding, $1, 10), pagerank() ) AND year >= 2019 ORDER BY _score DESC LIMIT 5;

This single SQL statement:

- Searches paper titles using Bayesian BM25 probabilistic scoring

- Finds semantically similar papers via HNSW vector search

- Computes citation graph centrality through PageRank

- Fuses all three signals through log-odds conjunction

- Filters by publication year

- Returns the top 5 results

In a polyglot architecture, this query would require three separate systems, application-level score merging, and no transactional consistency. In Cognica, it is one query, one transaction, one optimizer.

Why This Matters

The unified approach eliminates an entire class of infrastructure:

- No sync pipelines between relational and graph stores

- No data duplication across systems

- No impedance mismatch between query languages

- ACID transactions spanning relational and graph operations

- Single optimizer that understands the full query structure

Graph queries are not a bolted-on feature. They are a natural consequence of the posting list algebra — one more operation that produces sets of IDs composable through Boolean logic, just like every other paradigm in the engine.

For teams building applications that need relationship-aware queries alongside traditional SQL, full-text search, or vector similarity, this means deploying one system instead of three or four, with no compromise on query expressiveness or transactional guarantees.